A few months ago, I found myself watching Kara Swisher interview herself.

Not metaphorically. Literally.

On a monitor in our studio sat two versions of Kara: the real Kara, leaning forward with her trademark skepticism, and an AI version of Kara responding in real time—occasionally with the same dry humor and sharp edge that has defined her journalism career for decades.

At one point, AI Kara started pushing questions back at her.

That was the moment the room relaxed a little. It suddenly felt less like a technology demo and more like… Kara.

The episode, airing May 16th as part of CNN’s Kara Swisher Wants to Live Forever, explores different approaches to aging and longevity. Some are medical. Some are lifestyle changes. Ours was something stranger and harder to categorize.

What does it mean to preserve not just information about a person, but the experience of talking to them?

That question has quietly shaped most of my career at StoryFile. Long before the recent explosion of generative AI, StoryFile was building interactive interviews with Holocaust survivors, veterans, civil rights leaders, and historical witnesses. The goal was never to create fictional simulations. It was to preserve lived experience in a form people could actively engage with.

At museums like The National WWII Museum in New Orleans, visitors sit down and ask questions naturally, in their own words, to members of the WWII generation. Sometimes children ask unexpectedly direct questions. Sometimes veterans ask questions nobody else would think to ask. Sometimes visitors simply want to know what someone missed most about home.

The answers are often imperfect. Emotional. Occasionally contradictory. Which is exactly why they matter. I think people misunderstand what makes conversations feel human. It is not perfect grammar or photorealistic rendering. It is specificity. Hesitation. Humor. Personal memory. The tiny details someone carries for decades because they actually lived them.

Modern AI is incredibly good at producing plausible language. But plausibility is different from experience. Most generative large language models are trained on millions of internet pages, news articles, and books. If you ask ChatGPT to tell the story of a veteran landing on D-Day, you will get an amalgamation of many text sources found on the internet, both real and fictional. It is similar to how “Saving Private Ryan” was inspired by real events but was not a single lived experience. A generative model can summarize what D-Day was. It cannot remember what the water smelled like stepping off the landing craft.

That distinction became very important while building Kara’s StoryFile.


The Pre-interview: Building the Digital Kara

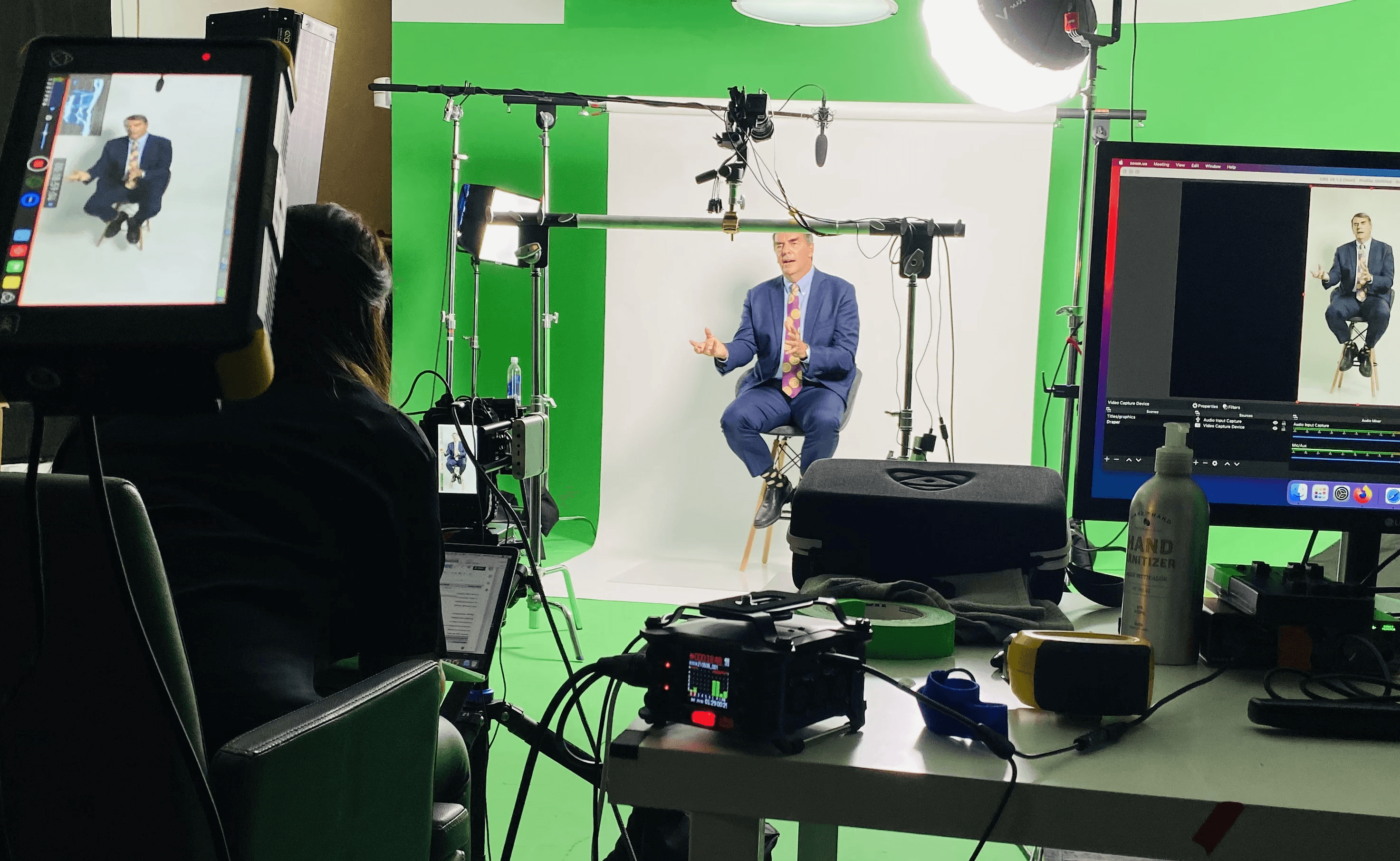

Kara’s interview was intentionally compressed for the documentary. We recorded about two hours of conversation with a professional interviewer, covering everything from her childhood and early reporting career to philosophy, technology, mortality, and Silicon Valley culture. In the end, we captured 77 responses and just under an hour of usable interview material. Normally our productions are far longer. Some interviews span multiple days and thousands of questions. But even in this shorter session, you could already see something emerge that felt recognizably personal.

Not because of the AI. Because of Kara.

The AI only worked because there was a real person underneath it. That sounds obvious, but it cuts against much of the current conversation around artificial intelligence. Right now, there is enormous excitement around generating human likeness from tiny amounts of material—a few photos, a voice sample, maybe some social media posts. And honestly, the visual side is becoming relatively easy. The hard part is memory.

The hard part is understanding how someone thinks about their childhood fifty years later. Or how grief changes the way they tell a story. Or which moments they repeat because those moments quietly defined them. That is why interviewing matters so much. A good interview is not data collection. It is an excavation.

The interview video was then cut into individual clips, each a different story. At the core of StoryFile’s approach is a simple premise: visitors can ask natural, open-ended questions, and the AI engine identifies the best video clip, drawn directly from the source interview, in real time. This works because a good story can answer dozens of different questions, and each response is 100% Kara. In practical terms, the AI was not inventing Kara from scratch. It was trying to stay grounded in the real Kara as much as possible.
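To make the idea of matching a question to a recorded clip concrete, here is a minimal sketch. It assumes a simple word-overlap score (cosine similarity over word counts) as a stand-in; it is not a description of StoryFile’s actual engine. The clip IDs, transcripts, and function names are hypothetical, and the transcripts are invented placeholders rather than quotes from any real interview.

```python
import math
import re
from collections import Counter

# Hypothetical clip library: IDs and transcripts are invented placeholders.
CLIPS = {
    "home": "the thing i missed most about home was my mother's cooking",
    "first_job": "my first job was delivering newspapers before school",
    "advice": "the best advice i ever got was to keep asking questions",
}

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def best_clip(question, clips=CLIPS):
    """Return (clip_id, score) for the transcript most similar to the question."""
    q = Counter(tokenize(question))
    scores = {cid: cosine(q, Counter(tokenize(t))) for cid, t in clips.items()}
    cid = max(scores, key=scores.get)
    return cid, scores[cid]

clip_id, score = best_clip("What did you miss most about home?")
print(clip_id, round(score, 3))
```

Because one recorded story can match many differently worded questions, the same clip can be returned for "What did you miss most about home?" and "Tell me about home." A production system would likely use semantic matching rather than raw word overlap, and would need a minimum score below which no clip is returned.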


The Conversation

The moment of truth came when Kara Swisher returned to turn her razor-sharp wit and penetrating questions on herself, meeting her own digital doppelganger face to face. The digital Kara was shown life-size on our latest HoloGlass transparent display, so the two felt like they were in the room together. For questions about her father and childhood, the StoryFile AI returned authentic responses from her original interview. However, the digital Kara did not just repeat herself. The Lookalike generative AI dynamically adapted to the conversation, maintaining natural flow and handling unexpected phrasing or follow-up questions. Lookalike created new real-time video responses that incorporated relevant details from her articles, podcasts, and in particular her autobiography, “Burn Book: A Tech Love Story.” The AI prompt was designed to imitate Kara’s blunt, funny, informed style, to be concise and conversational, and to occasionally ask probing, challenging questions of its own. This integration allowed both Karas to engage in a deeper two-way debate, challenging each other on the inspiring potential and the dangers of realistic digital humans.

Some members of the documentary crew had already seen video of the digital Kara, but Kara was seeing her digital doppelganger for the first time on camera. The crew were surprised by how easily the AI was able to capture her voice, style, and humor. However, having your AI double talk back to you is a much higher bar, and it can feel a little weird. You know yourself best: you know how you would respond to each question, and you can spot any factual inconsistencies or missing facial expressions.

So how did Kara react to her digital alter ego? First, she thought her AI twin should smile more. The ability of generative video to incorporate non-verbal facial expressions and gestures is rapidly improving. Currently, the digital Kara processes only the text of the real Kara’s questions. By adding a camera, the AI could start to recognize non-verbal input, understand social cues and the context of each response, and then choose the appropriate tone, whether to smile, cry, frown, or get angry.

Kara’s second criticism was that the digital AI was too compliant and submissive. At one point, Kara suggested that her digital double might need to be deleted, to be “killed,” because artificial intelligence technology could become too dangerous. The Kara AI acknowledged these points and couldn’t really object. It seems natural that a digital version of yourself would share all your opinions, but should it stand up to defend its own existence?

Most AI agents are trained as digital assistants to help write an email, provide navigation directions, or summarize complex documents. They do not have their own goals and dreams beyond simply completing tasks on your behalf. This is often why they are so compliant. On the other hand, most real conversations represent the intersection of human goals. Each person might have a different aim: negotiating a deal, teaching a difficult concept, or just building friendships and making someone laugh. This leads to much more complicated and interesting conversations.

Real human connections are made by recognizing shared experiences and common interests, then highlighting them in the conversation. In a previous episode entitled “How to hack loneliness”, Kara created a digital companion or “replika” based on a fictional picture and personality. The conversation with this replica was shallow and simplistic because the AI lacked any real-world interests or memories. StoryFile addresses this by tying each conversation back to real-world stories.

Authenticity is not just a product feature. It is a responsibility.

In museums and educational settings, we are extremely cautious about synthetic generation. If someone asks a Holocaust survivor about a concentration camp, or asks a veteran about combat, the response should come from something they actually said—not an AI approximation of what they might have said. That grounding matters ethically as much as technically.

Malicious deep fakes can undermine trust by blurring the line between reality and fabrication, causing people to doubt authentic media and believe false information. As AI-generated videos and audio become more realistic, they empower disinformation, damage reputations, and enable the "liar's dividend," where real, incriminating evidence is dismissed as fake. For hybrid avatars like Kara’s, which combine real and synthetic responses, it is critical that we clearly label each video and establish provenance. Either we identify when each video was recorded and link back to the original source material, or we identify the supplemental documents and AI models used to generate new responses.
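As an illustration of what labeling each video could look like in practice, here is a minimal sketch of a provenance record that covers both cases: a recorded clip with its recording date and source reference, or a generated clip with the model and supplemental documents behind it. Everything here is an assumption for illustration, including the field names, the label wording, and the example values; it does not describe StoryFile’s actual metadata format.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Provenance:
    """Hypothetical provenance record attached to one video response."""
    kind: str                          # "recorded" or "generated"
    recorded_on: Optional[str] = None  # recording date, for recorded clips
    source_ref: Optional[str] = None   # pointer to the original interview footage
    model: Optional[str] = None        # generating model, for generated clips
    supplemental_docs: List[str] = field(default_factory=list)

def label(p: Provenance) -> str:
    """Build a viewer-facing label, refusing to label incomplete records."""
    if p.kind == "recorded":
        if not (p.recorded_on and p.source_ref):
            raise ValueError("recorded clips need a date and a source reference")
        return f"Recorded {p.recorded_on} | source: {p.source_ref}"
    if p.kind == "generated":
        if not p.model:
            raise ValueError("generated clips need the generating model")
        docs = ", ".join(p.supplemental_docs) or "none listed"
        return f"AI-generated by {p.model} | based on: {docs}"
    raise ValueError(f"unknown provenance kind: {p.kind!r}")

# Placeholder values, not real recording dates or model names.
print(label(Provenance("recorded", recorded_on="2024-01-01",
                       source_ref="interview/clip-012")))
print(label(Provenance("generated", model="example-model",
                       supplemental_docs=["Burn Book"])))
```

Raising an error for incomplete records is a deliberate choice in this sketch: a response with no provenance is never shown with a blank or default label.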

Making this technology accessible to all

One final recurring theme in the CNN documentary is the question of accessibility. Should longevity technology be available only to the privileged few who can afford high-end spa treatments, surgical procedures, or expensive medical scans? The same question applies to our work. Not every family can spend days in a studio with a professional crew. Most people do not have autobiographies. Most people do not even know where to begin when telling their life story. That realization has pushed us toward a new idea internally: the AI biographer. Instead of asking someone to “document their life,” we are developing a system that guides them gently through memory and storytelling. It can gather questions from children, siblings, or friends. It can notice gaps. It can encourage reflection. It can help someone move beyond surface facts into the stories that actually define a person.

Previous generations left us photographs, letters, and occasional home videos. Future generations may inherit conversations. They may hear stories in someone’s own voice. They may ask their grandparents questions long after they are gone. Twenty years from now, a child will be able to talk to her great-grandmother for the first time because that ancestor recorded a StoryFile. Not because AI replaced a human being, but because it helped preserve the parts of being human that matter most: finding shared memories across time.

Final Thoughts

Working on the Kara project also made me think about why interactivity changes emotional connection so dramatically. Traditional documentaries are beautifully crafted, but they are ultimately linear. The audience follows the path chosen by the filmmaker. Interactive conversation changes the balance completely. People become active participants. They ask the questions they care about. They interrupt. They redirect. They get curious. They remember more details. The conversation becomes partially theirs. That may sound subtle, but it changes how people learn and how they emotionally connect.

I have watched children ask questions in museums that no documentary producer would ever script. I have watched visitors cry after hearing an answer that unexpectedly mirrored their own family experience. I have watched veterans sit quietly for twenty minutes after talking to another veteran from a different war. Those moments do not come from technology alone. They come from recognition. From seeing part of yourself reflected in another person’s lived experience. Which brings me back to the question Kara’s series asks: can technology help us live forever?

I do not think AI creates immortality. But I do think it changes what we are able to leave behind.

Which path is right for you?

If you watched the show and wondered how you can use this technology, we’ve made it accessible in two ways: